scatter ( X, X, c = Y, edgecolors = "k", cmap = plt. Paired ) # Plot also the training points plt. c_ ) # Put the result into a color plot Z = Z. Jordan Crouser at Smith College for SDS293: Machine Learning (Spring 2016). 154-161 of 'Introduction to Statistical Learning with Applications in R' by Gareth James, Daniela Witten, Trevor Hastie and Robert Tibshirani.

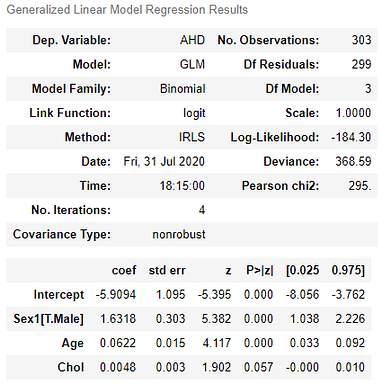

LOGISTICREGRESSION PYTHON JUPYTER NOTEBOOK CODE

Code source: Gaël Varoquaux Modified for documentation by Jaques Grobler License: BSD 3 clause. The datapoints are colored according to their labels. arange ( y_min, y_max, h )) Z = logreg. This lab on Logistic Regression is a Python adaptation from p. Show below is a logistic-regression classifiers decision boundaries on the first two dimensions (sepal length and width) of the iris dataset. max () + 0.5 h = 0.02 # step size in the mesh xx, yy = np. For that, we will assign a color to each # point in the mesh x. fit ( X, Y ) # Plot the decision boundary. logreg = LogisticRegression ( C = 1e5 ) logreg. target # Create an instance of Logistic Regression Classifier and fit the data. data # we only take the first two features. # if they are between 0.5 and 1, they will be considered 1Ĭm = np.histogram2d(actual_values, pred_values, bins=bins)Īccuracy = (cm+cm)/cm.# Code source: Gaël Varoquaux # Modified for documentation by Jaques Grobler # License: BSD 3 clause import numpy as np import matplotlib.pyplot as plt from sklearn.linear_model import LogisticRegression from sklearn import datasets # import some data to play with iris = datasets. # Create a histogram, where if values are between 0 and 0.5 tell will be considered 0

#Predict the values using the Logit model # this is the variable where you have the fitted model # In the case of a logistic regression, it should be a single column with 0s and 1s # These are the actual values from the test_data # data is a data frame formatted in the same way as your input data (without the actual values) Sm.LogitResults.predict() returns the values predicted by our model.ĭef confusion_matrix( data,actual_values,model): Check if the model is significant and the variable is significant.Different models will have completely different an incomparable pseudo r-squares.this measure is mostly useful for comparing variations of the same model.A good pseudo r-squared is somewhere between 0.2 and 0.4.you may want to compare the log likelihood of your model with the LL-Null to see if your model has any explanatory power.the log-likelihood of a model which has no independent variables.Value of the Log-Likelihood is usually negative.The bigger the likelihood function, the higher probability that our model is correct.# ^ Output will tell the function value and Iterations. Reg_log = sm.Logit(y,x) # We use sm.Logit P ( Y ) = e ( β 0 + β 1 x 1 + ⋯ + β k x k ) 1 + e ( β 0 + β 1 x 1 + ⋯ + β k x k ) p(Y)=\frac) l o g ( o d d s 1 o d d s 2 ) = coef_of_x1 ( 1 − 0 ) (1 - 0) ( 1 − 0 ) = coef_of_x1īy taking exponent you can find the difference of o d d s 1 odds_1 o d d s 1 and o d d s 2 odds_2 o d d s 2 using numpy. Logistic regression predicts the probability of an event occurring.We can use logistic regression to predict Yes / No (Binary Prediction) On real world problems often require more sophisticated non-linear models.Ī logistic regression implies that the possible outcomes are not numerical but rather categorical.Į.g. If a regression assumption is violated, performing regression analysis will yeild an incorrect result.

We should consider them before performing Logistic regression analysis.
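The logistic formula, the odds relationship and the histogram-based confusion matrix discussed in these notes can be checked numerically on toy numbers. The following is only a minimal sketch: the coefficients in `beta`, the arrays `actual` and `predicted_prob`, and the helper names `logistic_probability` and `odds` are made up for illustration and do not come from any fitted model.

```python
import numpy as np


def logistic_probability(beta, x):
    """p(Y) = e^(b0 + b1*x1 + ... + bk*xk) / (1 + e^(b0 + b1*x1 + ... + bk*xk))."""
    z = beta[0] + np.dot(beta[1:], x)
    return np.exp(z) / (1.0 + np.exp(z))


def odds(p):
    """Odds of an event that has probability p."""
    return p / (1.0 - p)


# Hypothetical coefficients: intercept b0 = -1.0 and one slope b1 = 0.8
beta = np.array([-1.0, 0.8])

# Probabilities when x1 = 0 and when x1 = 1
p_x0 = logistic_probability(beta, np.array([0.0]))
p_x1 = logistic_probability(beta, np.array([1.0]))

# The ratio of the two odds equals exp(coefficient of x1)
odds_ratio = odds(p_x1) / odds(p_x0)
print(odds_ratio, np.exp(beta[1]))

# Toy confusion matrix: rows are actual classes, columns are predicted classes
actual = np.array([0, 0, 1, 1, 1, 0])
predicted_prob = np.array([0.1, 0.6, 0.8, 0.4, 0.9, 0.2])
bins = np.array([0, 0.5, 1])  # below 0.5 counts as 0, from 0.5 up counts as 1
cm = np.histogram2d(actual, predicted_prob, bins=bins)[0]
accuracy = (cm[0, 0] + cm[1, 1]) / cm.sum()
print(cm)
print(accuracy)
```

The diagonal of `cm` holds the correctly classified observations, so the accuracy is the diagonal sum divided by the total count. With a real model, `predicted_prob` would be replaced by the output of the fitted model's `predict()`.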